Today, we announce the general availability of data preparation authoring in AWS Glue Studio Visual ETL. This is a new no-code data preparation user experience for business users and data analysts with a spreadsheet-style UI that runs data integration jobs at scale on AWS Glue for Spark. The new visual data preparation experience makes it easier for data analysts and data scientists to clean and transform data to prepare it for analytics and machine learning (ML). Within this new experience, you can choose from hundreds of pre-built transformations to automate data preparation tasks, all without the need to write any code.

Business analysts can now collaborate with data engineers to build data integration jobs. Data engineers can use the Glue Studio visual flow-based view to define connections to the data and set the ordering of the data flow process. Business analysts can use the data preparation experience to define the data transformation and output. Additionally, you can import your existing AWS Glue DataBrew data cleansing and preparation “recipes” to the new AWS Glue data preparation experience. This way, you can continue to author them directly in AWS Glue Studio and then scale up recipes to process petabytes of data at the lower price point for AWS Glue jobs.

Visual ETL prerequisites (environment setup)

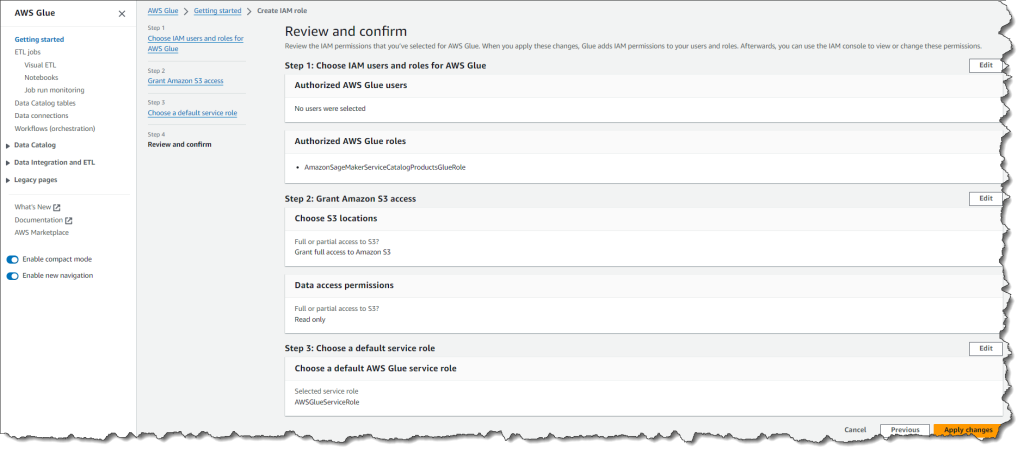

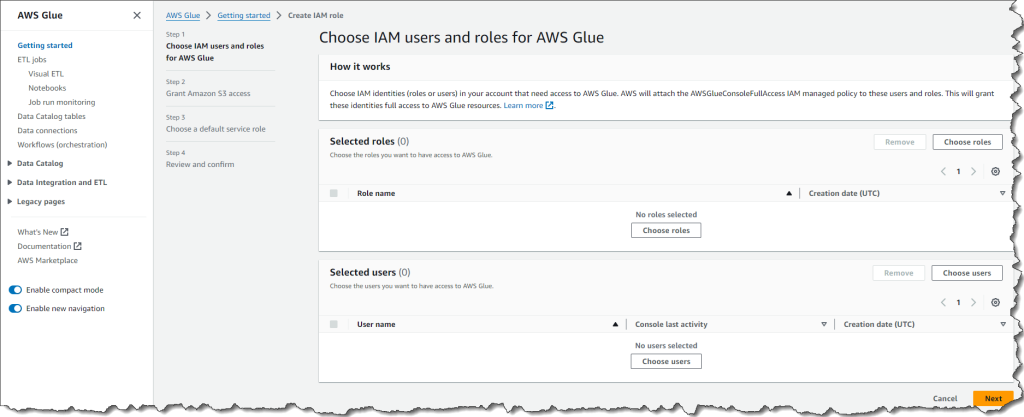

Visual ETL requires the AWSGlueConsoleFullAccess IAM managed policy to be attached to the users and roles that will access AWS Glue.

This policy grants these users and roles full access to AWS Glue and read access to Amazon Simple Storage Service (Amazon S3) resources.
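
If you prefer to script this step rather than use the console, the following is a minimal sketch of attaching the managed policy with the AWS SDK for Python (boto3). The user and role names in the usage comment are hypothetical placeholders, and the helper function is my own illustration, not part of AWS Glue. The client is passed in as a parameter so the function can be exercised without AWS credentials.

```python
# ARN of the AWS managed policy named in this post.
GLUE_CONSOLE_POLICY_ARN = "arn:aws:iam::aws:policy/AWSGlueConsoleFullAccess"


def attach_glue_console_policy(iam_client, user_names=(), role_names=()):
    """Attach AWSGlueConsoleFullAccess to each given IAM user and role.

    `iam_client` is expected to behave like boto3.client("iam").
    Returns a list of (kind, name) tuples for everything processed.
    """
    attached = []
    for user in user_names:
        iam_client.attach_user_policy(
            UserName=user, PolicyArn=GLUE_CONSOLE_POLICY_ARN
        )
        attached.append(("user", user))
    for role in role_names:
        iam_client.attach_role_policy(
            RoleName=role, PolicyArn=GLUE_CONSOLE_POLICY_ARN
        )
        attached.append(("role", role))
    return attached


# Usage (assumes boto3 is installed and credentials are configured;
# "data-analyst" and "glue-studio-role" are hypothetical names):
#
#   import boto3
#   attach_glue_console_policy(
#       boto3.client("iam"),
#       user_names=["data-analyst"],
#       role_names=["glue-studio-role"],
#   )
```

Attaching the policy requires IAM permissions of its own, so this is typically run by an account administrator.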

Advanced visual ETL flows

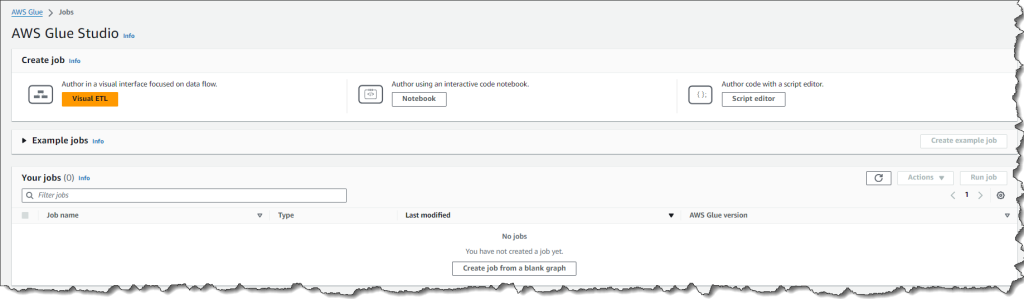

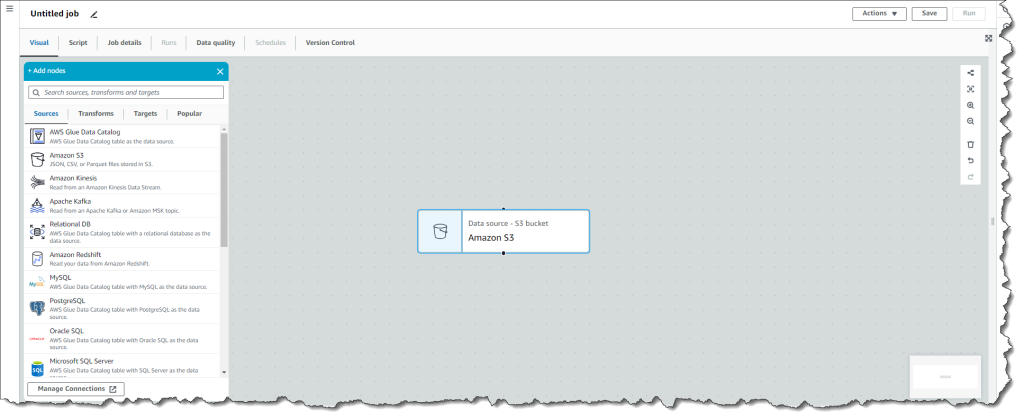

Once the appropriate AWS Identity and Access Management (IAM) role permissions have been defined, author the visual ETL job in AWS Glue Studio.

Extract

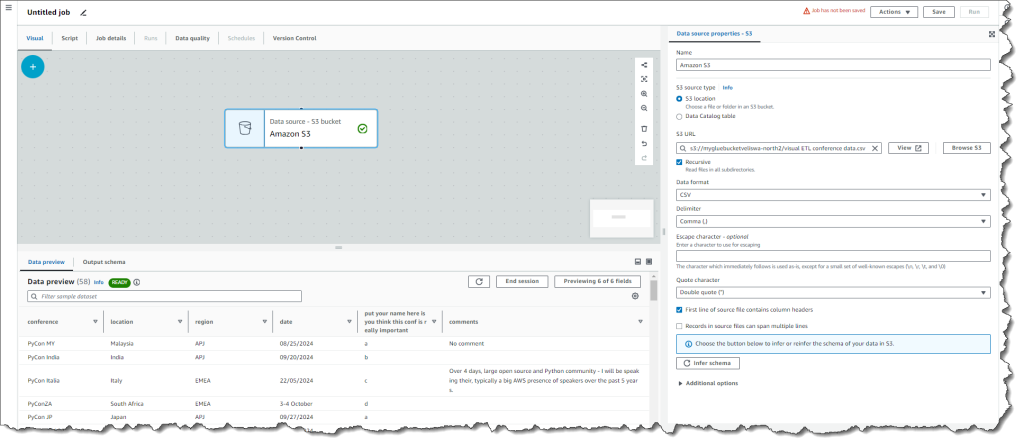

Create a source node by choosing Amazon S3 from the list of Sources.

Select the newly created node and browse for an S3 dataset. Once the file has been uploaded successfully, choose Infer schema to configure the source node. The visual interface then shows a preview of the data contained in the .csv file.

Earlier, I created an S3 bucket in the same Region as the AWS Glue visual ETL and uploaded a .csv file, visual ETL conference data.csv, containing the data that I will be visualizing.
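
For readers who want to script the upload, here is a minimal sketch using boto3. The bucket name in the usage comment is a hypothetical placeholder, the helper function is illustrative only, and the bucket is assumed to already exist in the same Region as the visual ETL job.

```python
import os


def upload_dataset(s3_client, local_path, bucket, key=None):
    """Upload a local file to S3 and return its s3:// URI.

    `s3_client` is expected to behave like boto3.client("s3").
    If no key is given, the file name is used as the object key.
    """
    key = key or os.path.basename(local_path)
    s3_client.upload_file(Filename=local_path, Bucket=bucket, Key=key)
    return f"s3://{bucket}/{key}"


# Usage (assumes boto3 is installed and credentials are configured;
# "my-visual-etl-bucket" is a hypothetical bucket name):
#
#   import boto3
#   uri = upload_dataset(
#       boto3.client("s3"),
#       "visual ETL conference data.csv",
#       "my-visual-etl-bucket",
#   )
```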

It’s important to set up the role permissions as detailed in the previous step to grant AWS Glue access to read the S3 bucket. Without performing this step, you’ll get an error that ultimately prevents you from seeing the data preview.

Transform

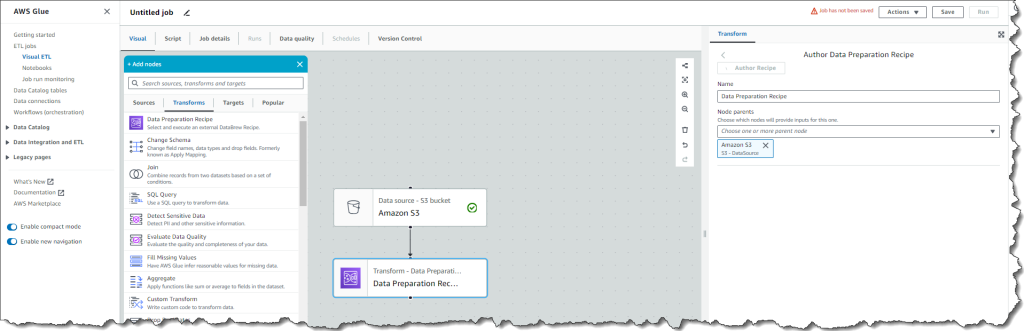

After the node has been configured, add a Data Preparation Recipe and start a data preview session. Starting this session typically takes about 2 to 3 minutes.

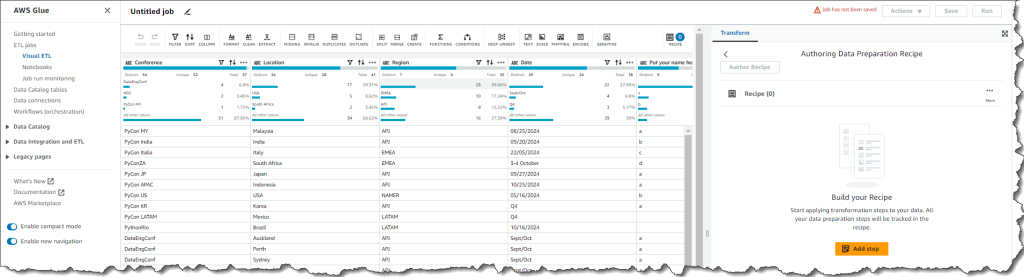

Once the data preview session is ready, choose Author Recipe to start an authoring session, and add transformations once the data frame is complete. During the authoring session, you can view the data, apply transformation steps, and view the transformed data interactively. You can undo, redo, and reorder the steps. You can also see the data type and statistical properties of each column.

You can start applying transformation steps to your data such as changing formats from lowercase to uppercase, changing the sort order, and more, by choosing Add step. All your data preparation steps will be tracked in the recipe.

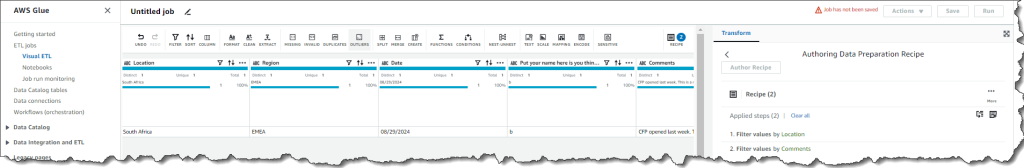

I wanted a view of conferences that will be hosted in South Africa, so I created two recipe steps to filter by condition: one where the Location column has values equal to “South Africa”, and one where the Comments column contains a value.
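
To make the two filter conditions concrete, here is a minimal plain-Python sketch of the same logic. It is not how AWS Glue runs the recipe (the job runs on AWS Glue for Spark); it only illustrates the conditions. The Location and Comments column names come from the walkthrough, while the Conference column and the sample rows are invented for illustration.

```python
import csv
import io


def filter_conferences(rows, country="South Africa"):
    """Keep rows whose Location equals `country` and whose Comments is non-empty."""
    kept = []
    for row in rows:
        location = (row.get("Location") or "").strip()
        comments = (row.get("Comments") or "").strip()
        # Condition 1: Location equals the target country.
        # Condition 2: Comments contains a value.
        if location == country and comments:
            kept.append(row)
    return kept


# Invented sample data standing in for the conference .csv file.
SAMPLE_CSV = """\
Conference,Location,Comments
Example Summit,South Africa,Keynote confirmed
Example Meetup,South Africa,
Example Expo,Kenya,Booth booked
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
for row in filter_conferences(rows):
    print(row["Conference"])  # prints "Example Summit"
```

Only the first sample row passes both conditions: the second has an empty Comments value and the third has a different Location.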

Load

Once you’ve prepared your data interactively, you can share your work with data engineers who can extend it with more advanced visual ETL flows and custom code to seamlessly integrate it into their production data pipelines.

Now available

The AWS Glue data preparation authoring experience is now publicly available in all commercial AWS Regions where AWS Glue DataBrew is available. To learn more, visit AWS Glue.

For more information, visit the AWS Glue Developer Guide and send feedback to AWS re:Post for AWS Glue or through your usual AWS support contacts.

— Veliswa